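Yesterday we only skimmed the overall ResNet architecture and focused mostly on the residual structure. Today we take another look at the full ResNet model and implement it hands-on with PyTorch!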

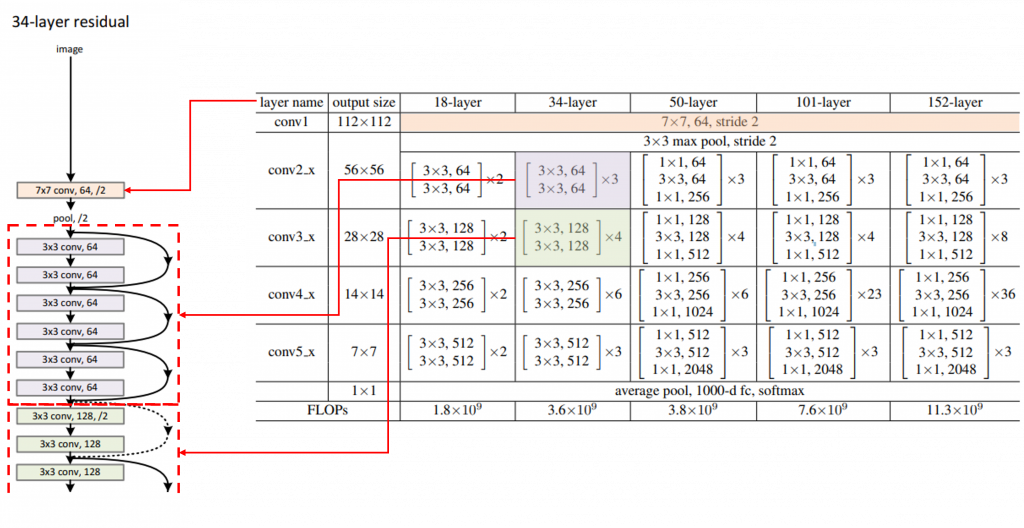

Yesterday's post included these two figures but did not explain in depth how to read them, so let's go through them again:

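We take ResNet-34 as the example. A Residual Block is the part enclosed in square brackets in the table. The first row of the table (light purple) corresponds to the first group of Residual Blocks: two convolutional layers with 3x3 kernels (input and output channels are both 64), and this structure is repeated 3 times. Next comes the second row (light green), which corresponds to the second group of Residual Blocks: two convolutional layers with 3x3 kernels (apart from the very first convolution, whose input and output channels are (64, 128), every input and output channel count is 128), and this structure is repeated 4 times. The remaining rows follow the same pattern.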
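As a quick check on where the "34" in the name comes from, the sketch below counts the weighted layers implied by the table. The block counts for the third and fourth groups (6 and 3) are taken from the from-scratch code later in this post; the variable names are made up for this illustration.

```python
# Number of Residual Blocks in each of the four groups of ResNet-34
blocks_per_stage = [3, 4, 6, 3]
convs_per_block = 2  # each basic Residual Block holds two 3x3 convolutions

stem_conv = 1  # the initial 7x7 convolution
fc_layer = 1   # the final fully connected layer

total_layers = stem_conv + convs_per_block * sum(blocks_per_stage) + fc_layer
print(total_layers)  # 34
```

Note that this count leaves out the 1x1 convolutions on the shortcut paths, as well as the pooling and batch normalization layers.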

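As in the VGG hands-on post (https://ithelp.ithome.com.tw/articles/10332866), to build a ResNet model we can either use the pretrained model that PyTorch provides or write one from scratch, so once again we cover the two approaches in separate parts.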

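```python
import torch
import torch.nn as nn
import torchvision.models as models

# Load a ResNet-34 model
# With pretrained weights (newer torchvision versions use
# weights=models.ResNet34_Weights.DEFAULT instead of pretrained=True):
# pretrained_resnet34 = models.resnet34(pretrained=True)

# Without pretrained weights (randomly initialized):
pretrained_resnet34 = models.resnet34()

print(pretrained_resnet34)
```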

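The `BasicBlock` class implements the Residual Block structure of ResNet. Each Residual Block contains two convolution groups (a "convolution group" here loosely means a regular convolutional layer + a batch normalization layer + an activation function; in the second group the activation is applied after the addition) together with a shortcut connection. The residual signal and the shortcut signal are added together, and the result is the output of the Residual Block. When the stride is not 1 or the input and output channel counts differ, the shortcut is implemented with `self.downsample`. `def _make_layer(self, block, out_channels, num_blocks, stride)` then assembles all the layers in the order specified by the paper. Once everything is assembled, we have a complete ResNet-34 model.

```python
import torch
import torch.nn as nn

# Basic block with two convolutional layers
class BasicBlock(nn.Module):
    def __init__(self, in_channels, out_channels, stride=1):
        super(BasicBlock, self).__init__()
        # First 3x3 convolution; it may downsample through `stride`
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)
        # Second 3x3 convolution; it keeps the spatial size
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)

        # Identity shortcut by default (an empty nn.Sequential returns its input)
        self.downsample = nn.Sequential()
        if stride != 1 or in_channels != out_channels:
            # 1x1 convolution so the shortcut matches the residual's shape
            self.downsample = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels),
            )

    def forward(self, x):
        shortcut = self.downsample(x)

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        out += shortcut  # add the residual signal and the shortcut signal
        out = self.relu(out)
        return out
```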
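To see why the shortcut needs a projection when a block changes the channel count or the resolution, here is a small standalone sketch. The 56x56 input size is an assumption for illustration (it is what a 224x224 image gives after the stem and the first group), and `main_path` and `projection` are hypothetical names.

```python
import torch
import torch.nn as nn

x = torch.randn(1, 64, 56, 56)  # e.g. the output of the first group

# Main path: the first 3x3 convolution of a block that halves the resolution
main_path = nn.Conv2d(64, 128, kernel_size=3, stride=2, padding=1, bias=False)

# Shortcut path: a 1x1 convolution with the same stride, then batch norm
projection = nn.Sequential(
    nn.Conv2d(64, 128, kernel_size=1, stride=2, bias=False),
    nn.BatchNorm2d(128),
)

residual = main_path(x)
shortcut = projection(x)
print(x.shape, residual.shape, shortcut.shape)

# The addition only works because both tensors now share one shape;
# adding `x` directly to `residual` would raise a shape error.
out = residual + shortcut
```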

```python
# ResNet-34 architecture
class ResNet34(nn.Module):
    def __init__(self, num_classes=1000):
        super(ResNet34, self).__init__()
        self.in_channels = 64

        # Stem: 7x7 convolution followed by max pooling
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        # Four groups of Residual Blocks: 3, 4, 6 and 3 blocks
        self.layer1 = self._make_layer(BasicBlock, 64, 3, stride=1)
        self.layer2 = self._make_layer(BasicBlock, 128, 4, stride=2)
        self.layer3 = self._make_layer(BasicBlock, 256, 6, stride=2)
        self.layer4 = self._make_layer(BasicBlock, 512, 3, stride=2)

        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512, num_classes)

    def _make_layer(self, block, out_channels, num_blocks, stride):
        layers = []
        # Only the first block of a group changes the channels / resolution
        layers.append(block(self.in_channels, out_channels, stride))
        self.in_channels = out_channels
        for _ in range(1, num_blocks):
            layers.append(block(out_channels, out_channels, stride=1))
        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, 512)
        x = self.fc(x)
        return x

# Create a ResNet-34 model from scratch
resnet34 = ResNet34()

# Print the architecture of the custom ResNet-34 model
print(resnet34)
```
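To follow how the feature map shrinks through this model, the sketch below applies the standard output-size formula for convolution and pooling layers, `(size + 2 * padding - kernel) // stride + 1`, to a 224x224 input. The 224 input size is an assumption (it matches the `Resize` used in the training code), and `conv_out_size` is a helper written only for this illustration.

```python
def conv_out_size(size, kernel, stride, padding):
    """Output width/height of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

# (name, kernel, stride, padding) of the layer that sets each stage's resolution.
# For layer1..layer4 this is the first 3x3 convolution of the group; the other
# 3x3 convolutions use stride 1 and padding 1, so they keep the size unchanged.
stages = [
    ("conv1", 7, 2, 3),
    ("maxpool", 3, 2, 1),
    ("layer1", 3, 1, 1),
    ("layer2", 3, 2, 1),
    ("layer3", 3, 2, 1),
    ("layer4", 3, 2, 1),
]

size = 224
sizes = {}
for name, kernel, stride, padding in stages:
    size = conv_out_size(size, kernel, stride, padding)
    sizes[name] = size
    print(f"{name}: {size}x{size}")
```

The final 7x7 map is then reduced to 1x1 by `AdaptiveAvgPool2d((1, 1))`, which is why `fc` takes exactly 512 input features.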

```python
import torch
import torch.nn as nn
import torch.optim as optim
import torchvision
import torchvision.transforms as transforms

# Hyperparameters
batch_size = 64
learning_rate = 0.001
num_epochs = 2

# Data preprocessing and loading
transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),
])

train_dataset = torchvision.datasets.CIFAR10(root='./data', train=True, transform=transform, download=True)
train_loader = torch.utils.data.DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)

test_dataset = torchvision.datasets.CIFAR10(root='./data', train=False, transform=transform)
test_loader = torch.utils.data.DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)
```
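The `Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))` step computes `(value - mean) / std` per channel. Since `ToTensor()` yields values in [0, 1], this maps them to [-1, 1]. A tiny sketch with made-up pixel values:

```python
# Example pixel values in the [0, 1] range produced by ToTensor()
pixels = [0.0, 0.25, 0.5, 1.0]
mean, std = 0.5, 0.5

# Same arithmetic that Normalize applies to every channel
normalized = [(p - mean) / std for p in pixels]
print(normalized)  # [-1.0, -0.5, 0.0, 1.0]
```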

```python
# Initialize the ResNet-34 model
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = ResNet34(10).to(device)  # pass the number of classes you need to classify

# Loss and optimizer
criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(model.parameters(), lr=learning_rate)

# Training loop
total_step = len(train_loader)
for epoch in range(num_epochs):
    model.train()
    for i, (images, labels) in enumerate(train_loader):
        # Forward pass
        outputs = model(images.to(device))
        loss = criterion(outputs, labels.to(device))

        # Backward pass and parameter update
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if (i + 1) % 100 == 0:
            print(f'Epoch [{epoch+1}/{num_epochs}], Step [{i+1}/{total_step}], Loss: {loss.item():.4f}')
```
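To get a feel for the numbers in the training log, the sketch below works out `total_step` by hand. It relies on the CIFAR-10 training split having 50,000 images and on the `DataLoader` keeping the last, smaller batch (its default behavior); `log_every` is a name introduced here for the logging interval of 100.

```python
import math

num_train_images = 50_000  # size of the CIFAR-10 training split
batch_size = 64
log_every = 100            # the loop prints every 100 steps

total_step = math.ceil(num_train_images / batch_size)
last_batch = num_train_images - (total_step - 1) * batch_size
logs_per_epoch = total_step // log_every

print(total_step, last_batch, logs_per_epoch)  # 782 16 7
```

So each epoch runs 782 steps, the final batch holds only 16 images, and the loss is printed 7 times per epoch.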

```python
# Evaluation
model.eval()
with torch.no_grad():
    correct = 0
    total = 0
    for images, labels in test_loader:
        outputs = model(images.to(device))
        # The index of the largest logit is the predicted class
        _, predicted = torch.max(outputs, 1)
        total += labels.size(0)
        correct += (predicted == labels.to(device)).sum().item()

    print(f'Test Accuracy: {100 * correct / total}%')
```
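The accuracy computation can be tried on a tiny hand-made batch. In the sketch below, the logits and labels are invented values for illustration only; `torch.max(logits, 1)` returns both the maximum values and their indices, and the indices serve as the predicted classes.

```python
import torch

# Made-up logits for 4 samples and 3 classes
logits = torch.tensor([[2.0, 0.1, -1.0],
                       [0.3, 0.2, 1.5],
                       [0.0, 3.0, 0.5],
                       [1.2, 0.4, 0.9]])
labels = torch.tensor([0, 2, 1, 2])

_, predicted = torch.max(logits, 1)  # indices of the largest logit per row
correct = (predicted == labels).sum().item()
total = labels.size(0)
accuracy = 100 * correct / total

print(predicted.tolist(), accuracy)  # [0, 2, 1, 0] 75.0
```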